The code the instructor provided in this lesson doesn't work. I've already set up the environment as instructed, but when I run the code I get the error below, and I couldn't figure out what it means. VS Code flags a problem on this line of code:

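```python
from langchain_openai import ChatOpenAI

# api_key is defined earlier in main2.py (not shown in this post)
modelo = ChatOpenAI(
    model="gpt-3.5-turbo",
    temperature=0.5,
    api_key=api_key,  # ERROR: VS Code flags this line
)
```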
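The post doesn't show where `api_key` comes from. Purely as an assumption, if it is read from an `OPENAI_API_KEY` environment variable (for example, loaded from a `.env` file), a small helper like the hypothetical `load_api_key` below fails fast with a clear message when the key is missing, so a configuration problem isn't confused with an error returned by the API:

```python
import os


def load_api_key(var_name: str = "OPENAI_API_KEY") -> str:
    """Hypothetical helper: return the API key from the environment.

    The variable name OPENAI_API_KEY is an assumption; adjust it to match
    the .env file or environment used in the course.
    """
    key = os.environ.get(var_name, "").strip()
    if not key:
        # A missing key is a local configuration problem, unlike a 429 from the API.
        raise RuntimeError(f"Environment variable {var_name} is not set or is empty")
    return key


# Usage (requires langchain_openai; not executed here):
# from langchain_openai import ChatOpenAI
# modelo = ChatOpenAI(model="gpt-3.5-turbo", temperature=0.5, api_key=load_api_key())
```

Note that the request in the traceback did reach OpenAI and came back as 429 rather than 401, which suggests the key itself was sent and accepted; a helper like this only rules out the missing-key case.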

Here is the error message:

Traceback (most recent call last):

File "d:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\main2.py", line 20, in

resposta = modelo.invoke(prompt)

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\langchain_core\language_models\chat_models.py", line 471, in invoke

self.generate_prompt(

~~~~~~~~~~~~~~~~~~~~^

[self._convert_input(input)],

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

...<6 lines>...

**kwargs,

^^^^^^^^^

).generations[0][0],

^

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\langchain_core\language_models\chat_models.py", line 1749, in generate_prompt

return self.generate(prompt_messages, stop=stop, callbacks=callbacks, **kwargs)

~~~~~~~~~~~~~^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\langchain_core\language_models\chat_models.py", line 1556, in generate

self._generate_with_cache(

~~~~~~~~~~~~~~~~~~~~~~~~~^

m,

^^

...<2 lines>...

**kwargs,

^^^^^^^^^

)

^

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\langchain_core\language_models\chat_models.py", line 1896, in _generate_with_cache

result = self._generate(

messages, stop=stop, run_manager=run_manager, **kwargs

)

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\langchain_openai\chat_models\base.py", line 1655, in _generate

_handle_openai_api_error(e)

~~~~~~~~~~~~~~~~~~~~~~~~^^^

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\langchain_openai\chat_models\base.py", line 1650, in _generate

raw_response = self.client.with_raw_response.create(**payload)

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\openai_legacy_response.py", line 367, in wrapped

return cast(LegacyAPIResponse[R], func(*args, **kwargs))

~~~~^^^^^^^^^^^^^^^^^

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\openai_utils_utils.py", line 287, in wrapper

return func(*args, **kwargs)

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\openai\resources\chat\completions\completions.py", line 1211, in create

return self._post(

~~~~~~~~~~^

"/chat/completions",

^^^^^^^^^^^^^^^^^^^^

...<47 lines>...

stream_cls=Stream[ChatCompletionChunk],

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

)

^

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\openai_base_client.py", line 1314, in post

return cast(ResponseT, self.request(cast_to, opts, stream=stream, stream_cls=stream_cls))

~~~~~~~~~~~~^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\Documentos\VS Code\Projects\4741-LangChain-e-Python-criando-ferramentas-com-a-LLM-OpenAI-main\langchain\Lib\site-packages\openai_base_client.py", line 1087, in request

raise self._make_status_error_from_response(err.response) from None

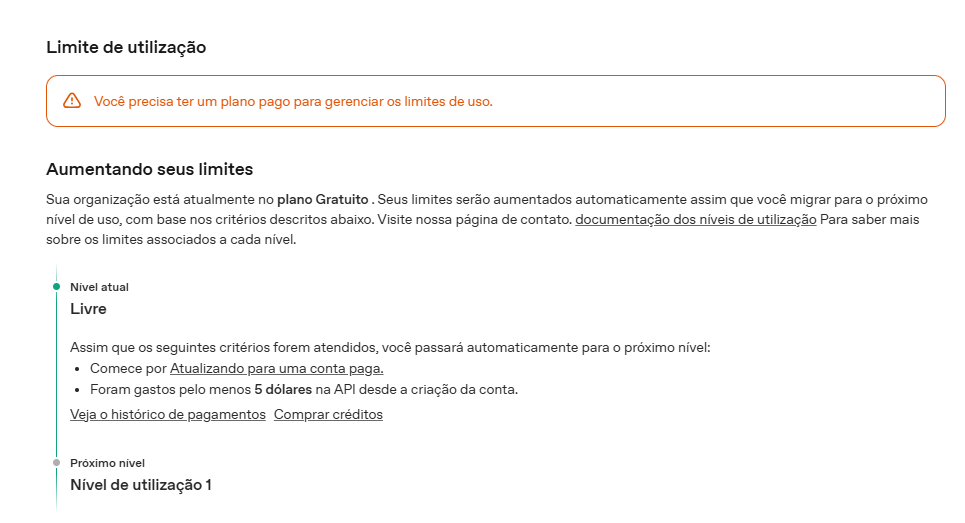

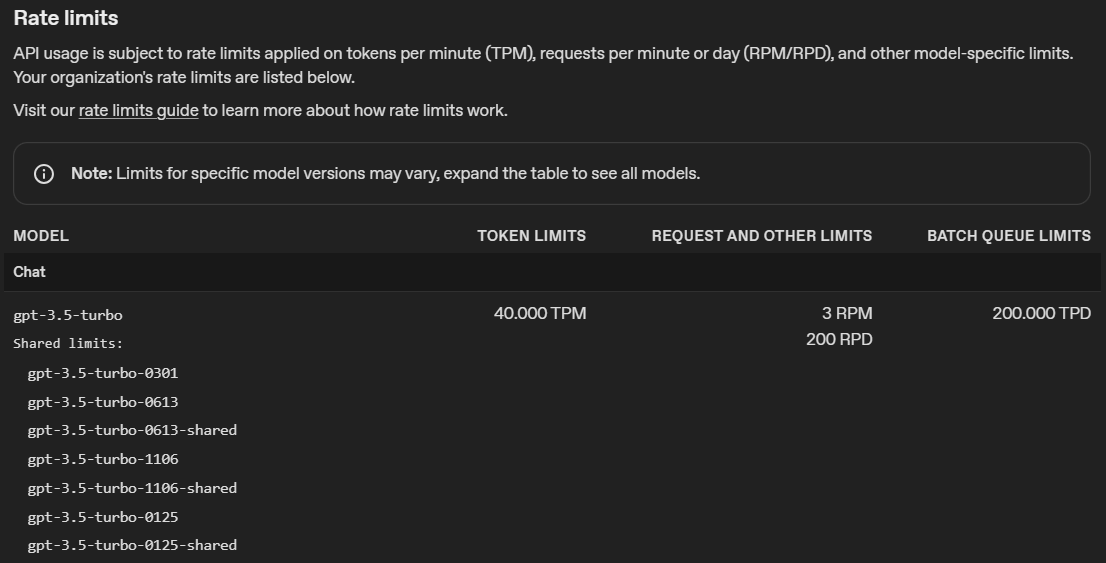

openai.RateLimitError: Error code: 429 - {'error': {'message': 'You exceeded your current quota, please check your plan and billing details. For more information on this error, read the docs: https://platform.openai.com/docs/guides/error-codes/api-errors.', 'type': 'insufficient_quota', 'param': None, 'code': 'insufficient_quota'}}
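The last line of the traceback appears to be the actual cause: the API answered with HTTP 429 and code `insufficient_quota`, which, per the message itself, points to the account's plan and billing rather than to the code. That is different from an ordinary 429 rate limit, where waiting and retrying can succeed. The hypothetical `classify_openai_error` helper below is a minimal sketch of telling the two apart from the error payload; its name and return values are illustrative, not part of any library:

```python
def classify_openai_error(status_code: int, payload: dict) -> str:
    """Hypothetical helper: classify an OpenAI error payload.

    Accepts either the full JSON body ({"error": {...}}) or just the
    inner error object, like the one printed at the end of the traceback.
    """
    error = payload.get("error", payload) if isinstance(payload, dict) else None
    if not isinstance(error, dict):
        error = {}
    code = error.get("code") or error.get("type")
    if status_code == 429 and code == "insufficient_quota":
        # No quota left on the account: retrying will not help,
        # the plan/billing has to be sorted out on the OpenAI platform.
        return "billing"
    if status_code == 429:
        # A regular rate limit: waiting and retrying may succeed.
        return "retry"
    return "other"


# Usage sketch (requires the openai package; assumes e.body holds the
# inner "error" object, so check it against your installed version):
# import openai
# try:
#     resposta = modelo.invoke(prompt)
# except openai.RateLimitError as e:
#     if classify_openai_error(e.status_code, e.body) == "billing":
#         print("Quota exhausted: check your plan and billing details")
```

Accepting both payload shapes keeps the helper usable whether you pass the raw JSON response or the already-unwrapped error object.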